Hi there!

Welcome! My name is Fernando Cejas, I’m an IT Nerd and this site is my open book where I express myself and share my ideas, experience and knowledge. I hope you find the material and content useful. Any feedback is always more than welcome. Enjoy your stay!

“If someone is in need, lend them a helping hand. Do not wait for a thank you.”

Featured Posts

Rust cross-platform... The Android part...

In this journey, we will walk through the process, infrastructure and architecture for integrating Rust into an Android project as part of a cross-platform development effort. Topics like JNI and the NDK are also covered, along with some anti-patterns and real use cases.

An over-engineered Home Lab with Docker and Kubernetes.

Setting up a personal Home Lab is not a task for lazy people: it comes with a high maintenance cost, and issues arise even when we follow the best IT infrastructure practices… but we can always learn a lot from it and have fun along the way. In this post I would like to share my journey, along with some tips, tricks and issues I bumped into. Let’s jump in!

Cooking Effective Code Reviews.

Reviewing code (PRs) is not an easy task, so in this post I will share tips and tricks on how your code reviews can better contribute to code quality, be more effective and increase team morale by following a structured and organized process. Let’s jump in!

Writing First-Class Features: BDD and Gherkin.

As our product evolves, we need to adopt a common vocabulary shared by all the moving parts of our organization: business users, analysts, managers, engineers, etc. Techniques like BDD and the Gherkin language can help us achieve this goal.

Technical Debt... GURU LEVEL UNLOCKED!

As Software Engineers, we know that Technical Debt and Legacy Code are familiar concepts we have to live with. Keeping code healthy and maintainable is challenging, so let’s dive into tips and techniques for addressing this problem effectively.

It is about Philosophy... Organization's Culture and the Power of Humanity.

Technical skills are valuable, but other qualities matter even more: respect, honesty and humility are required not only to become a better person but also to build a culture within your organization based on human values.

Learn out of mistakes: Postmortems to the rescue!

Postmortems are a valuable tool for learning from mistakes. They lead to useful conclusions and should be brought into retrospectives for further discussion, so that we do not fall into the same trap again.

Architecting Android...Reloaded.

Android Architecture has been evolving over the years and we need to adapt to the current times. Here we will dive into Functional Programming, OOP, Error Handling, Modularization and Patterns for the Android platform, all written in Kotlin.

See Blog for more

Featured Projects

SoundCloud

Part of the Core Engineering and Mobile teams. Lots of technical challenges in terms of scalability and complexity, since the platform serves millions of users.

Tignum

Joined as Head of Engineering. I have faced tons of challenges, from a fully demotivated team to an over-engineered legacy system, which places HIGH EXPECTATIONS on us when it comes to problem solving.

Living By The Code

I have always wanted to write a book, and I know how much effort it requires. Unfortunately, I have not done it yet, but I contributed to one as part of a joint effort with other IT professionals.

See Projects for more

Featured Tech Talks

Congratulations! Legacy code GURU level unlocked!

We know that Technical Debt and Legacy Code are familiar concepts we have to live with in our day-to-day work, so let’s take a quick journey through tips and techniques for addressing this problem effectively.

What Mom Never Told You About Multi threading.

In this tech talk, we will explore different alternatives for handling, managing and mastering multi-threading on mobile platforms. We will focus mainly on Android, but the knowledge acquired here can be applied to any software project.

The Art of Coding Disasters and Failures.

Software engineering and technology are about constant evolution and continuous improvement. To achieve this, we have a long path ahead of us, which is often not easy to follow. Let’s embark together on this journey through lessons learned.

See Tech Talks for more

Featured Postmortems

Modular Monolith: DB Schema Migration failure led to an outage.

LESSON LEARNED: Plan any DB Schema Migration that includes Data Manipulation upfront.

Kubernetes Node tainted, blocked Pods triggering a 503 (Service Unavailable).

LESSON LEARNED: Set up proper alerting and follow monitoring best practices in order to act rapidly in case of an issue or outage.

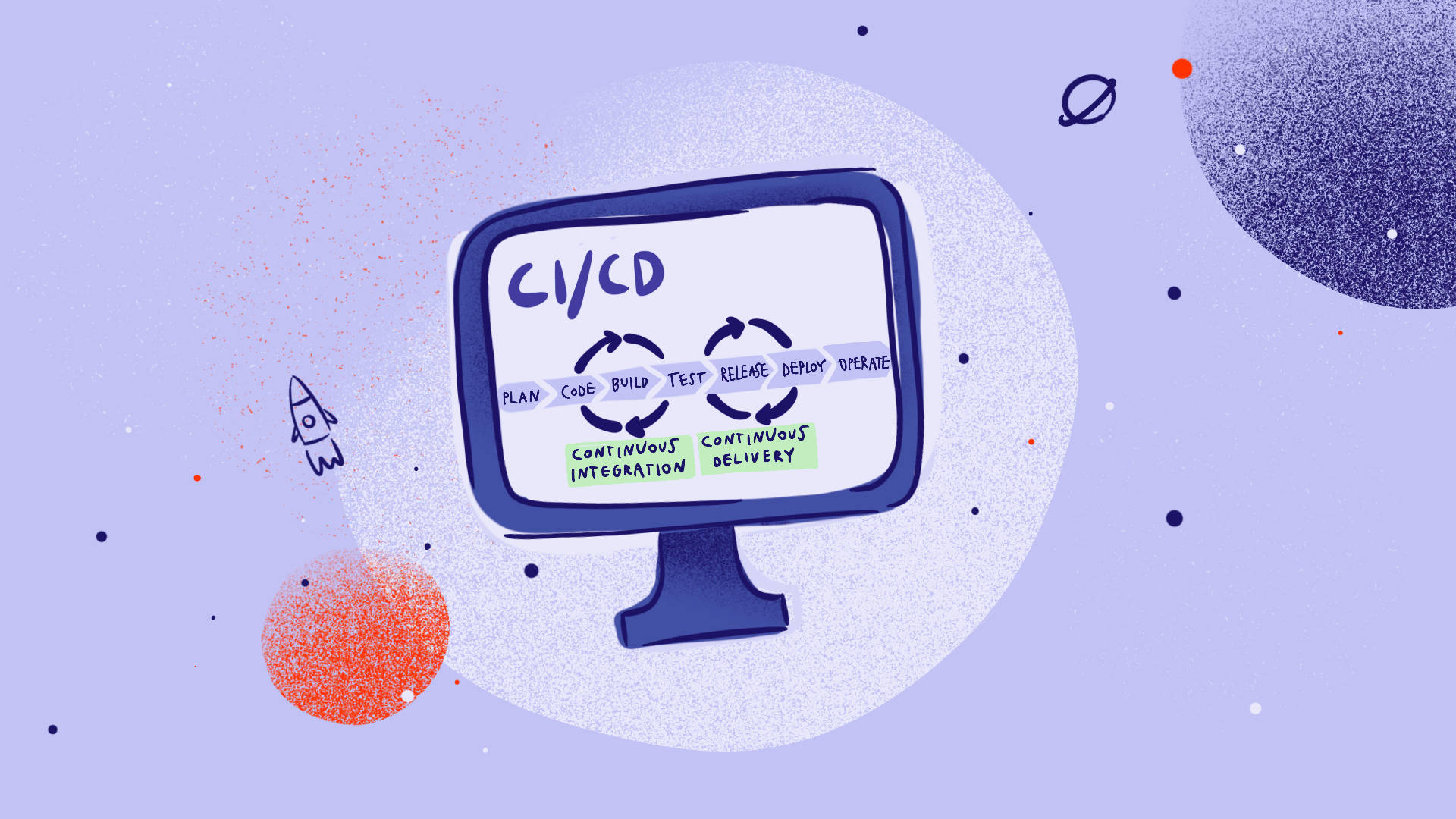

iOS CI Broken due to Personal Accounts used to build the client.

LESSON LEARNED: Personal private accounts should never be used to build the client, in order to avoid coupling to anything outside the Organization we are working for.

See Postmortems for more

Who am I?

Fernando works as Head of Engineering at @Tignum. His past roles include Director of Mobile at @Wire, Core Engineering at @SoundCloud, Developer Relations at @IBM and various roles in the start-up scene.

He describes himself as a curious learner, FOSS Advocate and a nerdy geek. Hobbies and Passions? Leadership, Software Engineering, Quantum Computing and Science. Other things? Amateur (frustrated) cyclist.